Memberships

The RoboNuggets Community

1.2k members • $97/month

Openclaw Labs

1.3k members • Free

Agent Zero

2.5k members • Free

Autonomee

343 members • $97/month

Claude Code Pirates

202 members • Free

Agentic Labs

748 members • $7/m

The Ai Agency - Free Resources

1.3k members • Free

AI Automation Agency PH

2.7k members • Free

AI Automation (A-Z)

151.2k members • Free

9 contributions to Agent Zero

My "perfect" model setup

This setup has been working great for me. The chat and web browser models both run on Ollama cloud. I think this is about as good as it gets without paying frontier-model pricing.

Chat: qwen3.5:cloud
Web Browser Model: qwen3-vl:32b-cloud
Embedding: sentence-transformers/all-MiniLM-L6-v2

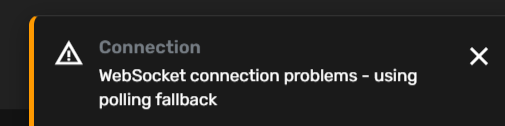

Bug in v1.5 - websocket errors

I keep getting this error after I upgraded to v1.5. Can you share how I might troubleshoot this, or is this a bug in 1.5? I am running on a Hostinger VPS with Dokploy and Ubuntu.

Connecting AgentZero to Signal

Hi guys, I'm just starting with A0 and I wanted to know if there's a way to connect it to the Signal CLI, even if it's not a native way inside of Agent Zero.

LM Studio Gurus

Does anyone here happen to be a guru with LM Studio, especially when it comes to optimizing different models? I'm on Windows (unfortunately) with an NVIDIA RTX PRO 4500 Blackwell 32 GB GPU. I want to run the best models I can for A0 locally. It's working, but I have a feeling I could optimize LM Studio more.

Agent Zero Stalling

I have two different instances of A0, one on my desktop and one on a VPS. Both are running different models. The desktop is running a local model through Ollama. I see BOTH stalling out, and even with nudges they struggle to move along. Is that normal? I am assuming it is something I am doing.

@dale-thomas-9967

AI explorer. Father. BBQ guy. Founder of APIMonitor.io, real-time cost tracking for AI agencies who want margins, not just revenue numbers.

Joined Feb 28, 2026
